Here's what happened to people's brains after receiving a robotic 'third thumb' / Humans + Tech - #81

+ Everything you’ve read about the ill-effects of screen time might be based on bad data + Google's new photo technology is creepy as hell – and seriously problematic + Other interesting articles

Hi,

Do you ever wonder whether your phone is listening to you? If so, the ‘Other interesting articles from around the web’ section below links to a NordVPN blog post that shows you how to test it.

Here's what happened to people's brains after receiving a robotic 'third thumb'

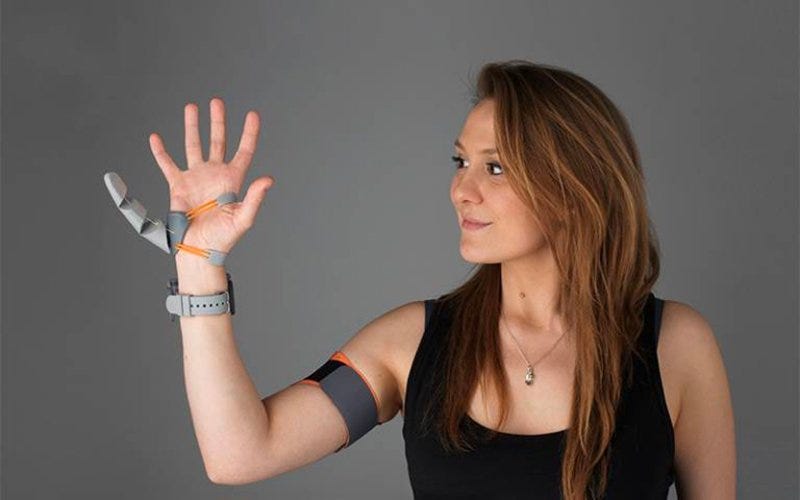

Researchers at University College London (UCL) asked 20 people to wear an extra thumb for six hours a day for five days. The 3D-printed thumb was attached to one hand and wirelessly controlled by pressure from the big toes [David Nield, Science Alert].

Within just a few days, participants were able to operate it naturally. Brain scans showed that the brain had adapted to the presence of the extra thumb and had altered how it represents the flesh-and-blood fingers.

Understanding what's going on here is crucial for improving our bodies' relationships with tools, robotic devices, and prosthetics. While these methods of augmentation can be incredibly useful, we need to understand their impact on our brains.

"Our study shows that people can quickly learn to control an augmentation device and use it for their benefit, without overthinking," says designer and research technician Dani Clode from University College London (UCL) in the UK, who made the Third Thumb.

"We saw that while using the Third Thumb, people changed their natural hand movements, and they also reported that the robotic thumb felt like part of their own body."

The linked article also includes a couple of videos. It’s fascinating that our brains can adapt so quickly to a new synthetic body part. If prosthetics can become part of a person’s natural movement patterns without extra effort once the brain adapts, that would be a massive advantage for people with physical disabilities.

The researchers say further work is needed to understand how more advanced prosthetics, or augmentations that give people additional capabilities, affect the brain.

Everything you’ve read about the ill-effects of screen time might be based on bad data

Researchers revisited 12,000 studies on screen time for a new meta-analysis published in Nature Human Behaviour. They found 47 studies, covering 50,000 people, in which participants’ screen time was logged or tracked in addition to being self-reported. Only 5% of those self-reports were accurate [Arianne Cohen, Fast Company].

The intent behind the studies was good. Rather than analyse screen minutes in a lab, the researchers wanted to base the studies on people’s regular, day-to-day screen use. However, a screen being on doesn’t mean it’s being used, and that’s just one of the ways bad data accumulates.

That’s bad. As the authors put it, “Self-reported media use correlates only moderately with logged measurements.” That’s like someone telling you that she slept four hours last night, and you later learning that she may have slept three hours, or six hours, or seven hours. Multiply that by 50,000 people. You see the conundrum.

Excessive screen use may still be harmful to us. But the results of these flawed studies should not be used to make policy decisions. Unfortunately, countries like the UK and Canada are already basing policies on them.

Google's new photo technology is creepy as hell – and seriously problematic

At Google I/O 2021, Google announced several updates to Google Photos that are unnecessarily invasive and even alter reality [John Loeffler, TechRadar].

The first is a feature in which Google automatically analyses your photos and points out similarities that may otherwise go unnoticed.

Which is to say that Google is running every photo you give it through some very specific machine learning algorithms and identifying very specific details about your life, like the fact that you like to travel around the world with a specific orange backpack, for example.

Fortunately, Google at least acknowledges that this could be problematic if, say, you are transgender and Google's algorithm decides that it wants to create a collection featuring photos of you that include those that don't align with your gender identity. Google understands that this could be painful for you, so you totally have the option of removing the offending photo from collections going forward. Or, you can tell it to remove any photos taken on a specific date that might be painful, like the day a loved one died.

A key point to note is that it is up to you, the user, to remove items manually. Google includes everything by default.

The other feature, which effectively has Google Photos altering reality, takes a sequence of photos shot close together and uses machine learning to add entirely fabricated frames between them, producing a GIF that simulates the live event.

Google presents this as helping you reminisce over old photos, but that's not what this is – it's the beginning of the end of reminiscence as we know it. Why rely on your memory when Google can just generate one for you? Never mind the fact that it is creating a record of something that didn't actually happen and effectively presenting it to you as if it did.

I agree with the author, John Loeffler: “Not everything needs machine learning.”

Other interesting articles from around the web

👀 How to test if your phone is spying on you [Carlos Martinez, NordVPN]

At least twice in the last six months, I’ve been sure that one of the devices in my home was listening in on conversations to show me relevant ads. Carlos Martinez at NordVPN has outlined how you can test this yourself.

His results were mixed, but it’s worth trying it out. Your results may differ depending on which phone you have and which apps you’ve installed.

🤖 Is it time for an algorithm bill of rights? These analysts think so [Connie Lin, Fast Company]

Two scholars from Carnegie Mellon University argue that businesses are obligated to share the secrets behind their algorithmic processes.

Then there are applications that carry decisions with heavier consequences, such as what news stories propagate furthest or which resumes rise to the top of the pile for a job opportunity. As algorithms shape the trajectory of our lives in increasingly profound ways, some researchers think companies have a new moral duty to illuminate how, exactly, they work.

Forcing private companies to reveal their secrets may not be practical, and I need to think about this aspect a little more. This gets into trade-secret territory, and it would be difficult to mandate disclosure without harming competition.

I do agree, however, that public services, such as algorithms used in the justice system or by government agencies, need to be transparent about how their algorithms work and how they arrive at their decisions.

🤳 Twitter's photo crop algorithm favours white faces and women [Khari Johnson, WIRED]

Twitter has stopped using its image-cropping algorithm in its mobile app after finding that the algorithm is biased towards white faces and women.

Researchers found bias when the algorithm was shown photos of people from two demographic groups. Ultimately, the algorithm picks one person whose face will appear in Twitter timelines, and some groups are better represented on the platform than others. When researchers fed a picture of a Black man and a white woman into the system, the algorithm chose to display the white woman 64 percent of the time and the Black man only 36 percent of the time, the largest gap for any demographic groups included in the analysis. For images of a white woman and a white man, the algorithm displayed the woman 62 percent of the time. For images of a white woman and a Black woman, the algorithm displayed the white woman 57 percent of the time.
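To put those percentages in perspective, here is a small Python sketch that turns each reported split into a percentage-point gap. It assumes each test image contains exactly two people and that the crop always shows one of them, so the other person’s share is 100 minus the reported figure. It only covers the three pairings quoted above, not every pairing in the study, and the names `REPORTED` and `selection_gap` are made up for this illustration.

```python
# Pairings quoted in the WIRED excerpt: (person the crop preferred, other person,
# percent of the time the preferred person was shown).
# Assumption: two people per image, and the crop always shows one of them.
REPORTED = [
    ("white woman", "Black man", 64),
    ("white woman", "white man", 62),
    ("white woman", "Black woman", 57),
]


def selection_gap(preferred_pct: int) -> int:
    """Percentage-point gap between the preferred person and the other person."""
    other_pct = 100 - preferred_pct
    return preferred_pct - other_pct


# Gap for each quoted pairing, keyed by (preferred, other).
gaps = {(a, b): selection_gap(p) for a, b, p in REPORTED}

for (a, b), gap in gaps.items():
    print(f"{a} vs {b}: {gap} points")

# Largest gap among the three quoted pairings only.
largest = max(gaps, key=gaps.get)
print("Largest gap:", largest)
```

A 64/36 split works out to a 28-point gap, compared with 24 points for 62/38 and 14 points for 57/43. A perfectly unbiased crop would give a gap of 0.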

Quote of the week

But when dealing with what is ethical and what is not, there isn't any room for "kinda". These are the kinds of choices that lead down paths we don't want to go down, and every step down a path makes it harder for us to turn back.

—John Loeffler, from the article, “Google's new photo technology is creepy as hell – and seriously problematic” [TechRadar]

I wish you a brilliant day ahead :)

Neeraj